1. Overview

Docker is one of the most popular container engines used in the software industry to create, package and deploy applications.

In this tutorial, we’ll learn how to set up Apache Kafka using Docker.

2. Single Node Setup

A single-node Kafka broker setup meets most local development needs, so let’s start with this simple setup.

2.1. docker-compose.yml Configuration

To start an Apache Kafka server, we first need to start a Zookeeper server.

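We can configure this dependency in a docker-compose.yml file, which ensures that the Zookeeper server always starts before the Kafka server and stops after it. Note that this controls only the start and stop order; Compose doesn’t wait for Zookeeper to be ready to accept connections.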
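To make the ordering concrete, here’s a minimal Python sketch that derives a start and stop order from depends_on entries. It only illustrates the ordering rule and isn’t how Compose is implemented; the `depends_on` dictionary and both function names are hypothetical:

```python
from graphlib import TopologicalSorter  # available in Python 3.9+

# Hypothetical in-memory view of the depends_on entries in a compose file:
# each service maps to the list of services it depends on.
depends_on = {
    "zookeeper": [],
    "kafka": ["zookeeper"],
}

def start_order(dependencies):
    """Return service names so that every dependency precedes its dependents."""
    return list(TopologicalSorter(dependencies).static_order())

def stop_order(dependencies):
    """Services stop in the reverse of the order in which they start."""
    return list(reversed(start_order(dependencies)))

print(start_order(depends_on))  # ['zookeeper', 'kafka']
print(stop_order(depends_on))   # ['kafka', 'zookeeper']
```

The same idea extends to larger dependency graphs, as long as they contain no cycles.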

Let’s create a simple docker-compose.yml file with two services, namely zookeeper and kafka:

```yaml
version: '2'
services:
  zookeeper:
    image: confluentinc/cp-zookeeper:latest
    environment:
      ZOOKEEPER_CLIENT_PORT: 2181
      ZOOKEEPER_TICK_TIME: 2000
    ports:
      - 22181:2181

  kafka:
    image: confluentinc/cp-kafka:latest
    depends_on:
      - zookeeper
    ports:
      - 29092:29092
    environment:
      KAFKA_BROKER_ID: 1
      KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka:9092,PLAINTEXT_HOST://localhost:29092
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1
```

In this setup, our Zookeeper server listens on port 2181 for the kafka service, which is defined within the same container setup. However, for any client running on the host, it’ll be exposed on port 22181.

Similarly, the kafka service is exposed to host applications through port 29092, while inside the container network it’s advertised on port 9092, as configured by the KAFKA_ADVERTISED_LISTENERS property.
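To see how the two advertised addresses break down, here’s a small Python sketch that splits the KAFKA_ADVERTISED_LISTENERS value from our file into listener names and addresses. The `parse_listeners` helper is hypothetical and written only for illustration; it isn’t part of Kafka or Docker:

```python
def parse_listeners(value):
    """Split 'NAME://host:port,...' into a {name: (host, port)} mapping."""
    listeners = {}
    for entry in value.split(","):
        name, address = entry.strip().split("://", 1)
        host, port = address.rsplit(":", 1)
        listeners[name] = (host, int(port))
    return listeners

# Value copied from the kafka service in docker-compose.yml.
advertised = parse_listeners(
    "PLAINTEXT://kafka:9092,PLAINTEXT_HOST://localhost:29092"
)

# Address handed to clients inside the container network.
print(advertised["PLAINTEXT"])       # ('kafka', 9092)
# Address handed to clients running on the host machine.
print(advertised["PLAINTEXT_HOST"])  # ('localhost', 29092)
```

A client on the host should therefore use localhost:29092, while another container on the same Compose network would use kafka:9092.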

2.2. Start Kafka Server

Let’s start the Kafka server by spinning up the containers using the docker-compose command:

```
$ docker-compose up -d
Creating network "kafka_default" with the default driver
Creating kafka_zookeeper_1 ... done
Creating kafka_kafka_1 ... done
```

Now let’s use the nc command to verify that both servers are listening on their respective ports:

```
$ nc -z localhost 22181
Connection to localhost port 22181 [tcp/*] succeeded!
$ nc -z localhost 29092
Connection to localhost port 29092 [tcp/*] succeeded!
```

Additionally, we can check the verbose logs while the containers are starting up and verify that the Kafka server is up:

```
$ docker-compose logs kafka | grep -i started
kafka_1 | [2021-04-10 22:57:40,413] DEBUG [ReplicaStateMachine controllerId=1] Started replica state machine with initial state -> HashMap() (kafka.controller.ZkReplicaStateMachine)
kafka_1 | [2021-04-10 22:57:40,418] DEBUG [PartitionStateMachine controllerId=1] Started partition state machine with initial state -> HashMap() (kafka.controller.ZkPartitionStateMachine)
kafka_1 | [2021-04-10 22:57:40,447] INFO [SocketServer brokerId=1] Started data-plane acceptor and processor(s) for endpoint : ListenerName(PLAINTEXT) (kafka.network.SocketServer)
kafka_1 | [2021-04-10 22:57:40,448] INFO [SocketServer brokerId=1] Started socket server acceptors and processors (kafka.network.SocketServer)
kafka_1 | [2021-04-10 22:57:40,458] INFO [KafkaServer id=1] started (kafka.server.KafkaServer)
```

With that, our Kafka setup is ready for use.
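The nc checks from earlier in this section can also be scripted. Here’s a minimal Python sketch of the same TCP reachability test; the `is_port_open` helper and its 2-second default timeout are assumptions made for illustration. Like `nc -z`, it only confirms that the port accepts connections, not that the broker is fully initialized:

```python
import socket

def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds, like `nc -z`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False

if __name__ == "__main__":
    # Host ports taken from the single-node docker-compose.yml.
    for name, port in (("zookeeper", 22181), ("kafka", 29092)):
        state = "open" if is_port_open("localhost", port) else "closed"
        print(f"{name}: localhost:{port} is {state}")
```

We could call this helper in a loop with a short sleep if a script needs to wait for the containers to come up.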

2.3. Connection Using Kafka Tool

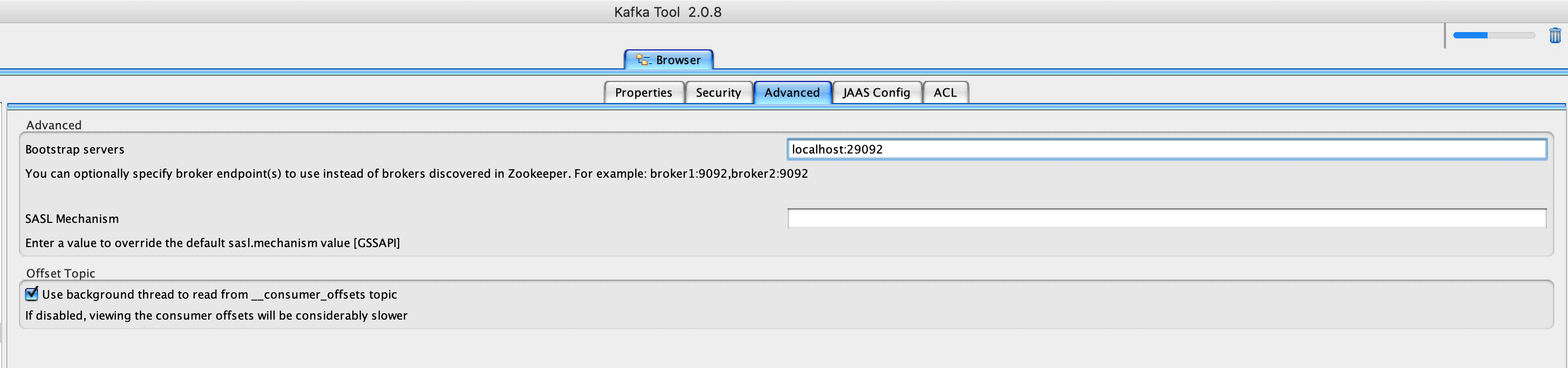

Finally, let’s use the Kafka Tool GUI utility to establish a connection with our newly created Kafka server, and later, we’ll visualize this setup:

Note that we need to set the Bootstrap servers property to connect to the Kafka server, which listens on port 29092 for the host machine.

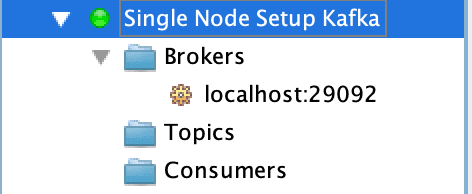

Finally, we should be able to visualize the connection on the left sidebar:

The entries for Topics and Consumers are empty because it’s a new setup. Once topics are created, we should be able to visualize data across partitions. Moreover, if there are active consumers connected to our Kafka server, we can view their details too.

3. Kafka Cluster Setup

For more stable environments, we’ll need a resilient setup. Let’s extend our docker-compose.yml file to create a multi-node Kafka cluster setup.

3.1. docker-compose.yml Configuration

A cluster setup for Apache Kafka needs to have redundancy for both Zookeeper servers and the Kafka servers.

So, let’s add configuration for one more node each for Zookeeper and Kafka services:

```yaml
---
version: '2'
services:
  zookeeper-1:
    image: confluentinc/cp-zookeeper:latest
    environment:
      ZOOKEEPER_CLIENT_PORT: 2181
      ZOOKEEPER_TICK_TIME: 2000
    ports:
      - 22181:2181

  zookeeper-2:
    image: confluentinc/cp-zookeeper:latest
    environment:
      ZOOKEEPER_CLIENT_PORT: 2181
      ZOOKEEPER_TICK_TIME: 2000
    ports:
      - 32181:2181

  kafka-1:
    image: confluentinc/cp-kafka:latest
    depends_on:
      - zookeeper-1
      - zookeeper-2
    ports:
      - 29092:29092
    environment:
      KAFKA_BROKER_ID: 1
      KAFKA_ZOOKEEPER_CONNECT: zookeeper-1:2181,zookeeper-2:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka-1:9092,PLAINTEXT_HOST://localhost:29092
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

  kafka-2:
    image: confluentinc/cp-kafka:latest
    depends_on:
      - zookeeper-1
      - zookeeper-2
    ports:
      - 39092:39092
    environment:
      KAFKA_BROKER_ID: 2
      KAFKA_ZOOKEEPER_CONNECT: zookeeper-1:2181,zookeeper-2:2181
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka-2:9092,PLAINTEXT_HOST://localhost:39092
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1
```

We must ensure that the service names and KAFKA_BROKER_ID are unique across the services.

Moreover, each service must expose a unique port to the host machine. Although zookeeper-1 and zookeeper-2 both listen on port 2181 inside their containers, they expose it to the host via ports 22181 and 32181, respectively. The same logic applies to the kafka-1 and kafka-2 services, which are exposed to the host on ports 29092 and 39092, respectively.
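These uniqueness rules are easy to check mechanically. Here’s a minimal Python sketch, assuming the compose file has already been loaded into a dictionary; the trimmed `services` mapping and both helper functions are hypothetical, and only the short HOST:CONTAINER port syntax used in our file is handled:

```python
from collections import Counter

# Hypothetical, trimmed-down view of the cluster's compose services; only the
# fields needed for the uniqueness checks are kept.
services = {
    "zookeeper-1": {"ports": ["22181:2181"]},
    "zookeeper-2": {"ports": ["32181:2181"]},
    "kafka-1": {"ports": ["29092:29092"], "environment": {"KAFKA_BROKER_ID": 1}},
    "kafka-2": {"ports": ["39092:39092"], "environment": {"KAFKA_BROKER_ID": 2}},
}

def duplicates(values):
    """Return the sorted values that occur more than once."""
    return sorted(v for v, n in Counter(values).items() if n > 1)

def find_conflicts(services):
    """Report host ports and broker IDs shared by more than one service."""
    host_ports = [
        mapping.split(":")[0]  # the host side of "HOST:CONTAINER"
        for svc in services.values()
        for mapping in svc.get("ports", [])
    ]
    broker_ids = [
        svc["environment"]["KAFKA_BROKER_ID"]
        for svc in services.values()
        if "KAFKA_BROKER_ID" in svc.get("environment", {})
    ]
    return {"host_ports": duplicates(host_ports), "broker_ids": duplicates(broker_ids)}

print(find_conflicts(services))  # {'host_ports': [], 'broker_ids': []}
```

Service names don’t need a separate check here because they’re dictionary keys, so duplicates can’t be represented in this structure.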

3.2. Start the Kafka Cluster

Let’s spin up the cluster by using the docker-compose command:

$ docker-compose up -d

Creating network "kafka_default" with the default driver

Creating kafka_zookeeper-1_1 ... done

Creating kafka_zookeeper-2_1 ... done

Creating kafka_kafka-2_1 ... done

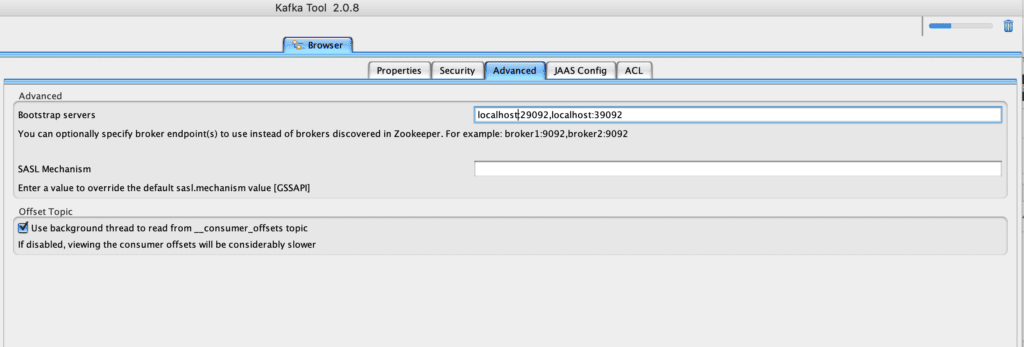

Creating kafka_kafka-1_1 ... doneOnce the cluster is up, let’s use the Kafka Tool to connect to the cluster by specifying comma-separated values for the Kafka servers and respective ports:

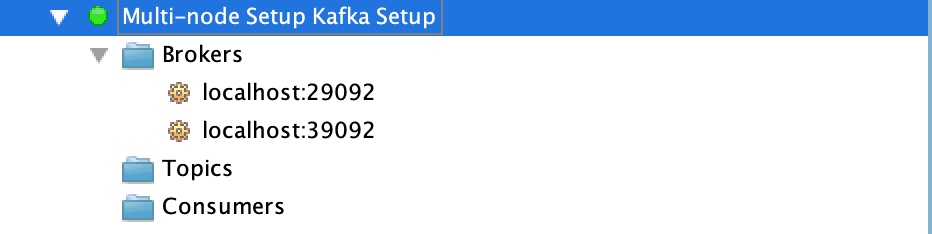

Finally, let’s take a look at the multiple broker nodes available in the cluster:
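For reference, the comma-separated server list we entered when connecting to the cluster can be built and parsed with a few lines of Python. This is only a sketch; the two helper names are hypothetical, and the ports are the host ports from our docker-compose.yml:

```python
def bootstrap_servers(host, ports):
    """Join host:port pairs into a comma-separated bootstrap servers string."""
    return ",".join(f"{host}:{port}" for port in ports)

def parse_bootstrap_servers(value):
    """Split a bootstrap servers string back into (host, port) tuples."""
    pairs = []
    for entry in value.split(","):
        host, port = entry.strip().rsplit(":", 1)
        pairs.append((host, int(port)))
    return pairs

# Host ports exposed by kafka-1 and kafka-2.
servers = bootstrap_servers("localhost", [29092, 39092])
print(servers)                           # localhost:29092,localhost:39092
print(parse_bootstrap_servers(servers))  # [('localhost', 29092), ('localhost', 39092)]
```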

4. Conclusion

In this article, we used Docker to create single-node and multi-node setups of Apache Kafka.

We also used the Kafka Tool to connect to the brokers and visualize their configured details.
